
An artificial intelligence proposal to automatic teeth detection and numbering in dental bite-wing radiographs

Yasin Yasa<sup>a</sup>, Özer Çelik<sup>b</sup>, Ibrahim Sevki Bayrakdar<sup>c</sup>, Adem Pekince<sup>d</sup>, Kaan Orhan<sup>e,f</sup>, Serdar Akarsu<sup>g</sup>, Samet Atasoy<sup>g</sup>, Elif Bilgir<sup>c</sup>, Alper Odabas<sup>b</sup> and Ahmet Faruk Aslan<sup>b</sup>

<sup>a</sup>Department of Oral and Maxillofacial Radiology, Faculty of Dentistry, Ordu University, Ordu, Turkey; <sup>b</sup>Department of Mathematics and Computer Science, Faculty of Science, Eskisehir Osmangazi University, Eskisehir, Turkey; <sup>c</sup>Department of Oral and Maxillofacial Radiology, Faculty of Dentistry, Eskisehir Osmangazi University, Eskisehir, Turkey; <sup>d</sup>Department of Oral and Maxillofacial Radiology, Faculty of Dentistry, Karabuk University, Karabuk, Turkey; <sup>e</sup>Department of Oral and Maxillofacial Radiology, Faculty of Dentistry, Ankara University, Ankara, Turkey; <sup>f</sup>Ankara University Medical Design Application and Research Center (MEDITAM), Ankara, Turkey; <sup>g</sup>Department of Restorative Dentistry, Faculty of Dentistry, Ordu University, Ordu, Turkey

ARTICLE HISTORY

Received 20 April 2020

Revised 29 September 2020

Accepted 6 October 2020

ABSTRACT

Objectives: Radiological examination has an important place in dental practice, and it is frequently used in intraoral imaging. The correct numbering of teeth on radiographs is a routine practice that takes time for the dentist. This study aimed to propose an automatic detection system for the numbering of teeth in bitewing images using a faster Region-based Convolutional Neural Networks (R-CNN) method.

Methods: The study included 1125 bite-wing radiographs of patients who attended the Faculty of Dentistry of Eskisehir Osmangazi University from 2018 to 2019. Faster R-CNN, an advanced object identification method, was used to identify the teeth. The confusion matrix was used to evaluate the success of the model.

Results: The deep CNN system (CranioCatch, Eskisehir, Turkey) was used to detect and number teeth in bitewing radiographs. Of 715 teeth in 109 bite-wing images, 697 were correctly numbered in the test data set. The F1 score, precision and sensitivity were 0.9515, 0.9293 and 0.9748, respectively.

Conclusions: A CNN approach for the analysis of bitewing images shows promise for detecting and numbering teeth. This method can save dentists time by automatically preparing dental charts.

Introduction

Radiological examination has an important place in dental practice and is frequently used in intraoral imaging. For this purpose, periapical and bitewing films are routinely used in clinics [1,2]. Imaging and interpretation of dental condition on radiographs are the most important steps in disease diagnosis [2]. In hospital information systems, in which almost all records are digital, physicians must maintain accurate dental records [3]. Depending on the experience and attentiveness of the physicians, misdiagnosis or inadequate diagnosis can occur in busy clinics. To prevent this, teeth must be correctly identified and numbered. The detection and numbering of teeth on radiographs are also important for forensic investigations [4]. Computer-aided systems have been developed to assist physicians in medical and dental imaging [3,5]. Teeth have been identified from dental films by various engineering methods [6], which rely on predefined engineered feature algorithms with explicit parameters based on expert knowledge [7]. Deep learning systems can automatically learn components in data without the need for prior interpretation by human experts. Convolutional neural networks (CNNs) are the most common deep learning architectures used in medical imaging [8]. CNNs can automatically extract image features using the original pixel information and are frequently used for dental imaging [9]. In dentistry, CNNs are used clinically for caries detection [10,11], apical lesion detection [12,13], diagnosis of jaw lesions [14,15], detection of periodontal disease [16,17], classification of maxillary sinusitis [18], staging of lower third molar development [19], detection of root fracture [20], evaluation of root morphology [21], localization of cephalometric landmarks [22] and detection of atherosclerotic carotid plaques [23]. Therefore, CNN-based deep learning algorithms show clinical potential. CNNs have been used to number teeth on panoramic and periapical films [9,24]. We propose an automatic detection system for numbering teeth in bitewing images using the faster Region-based Convolutional Neural Networks (R-CNN) method.

Materials and methods

Patient selection

Bite-wing radiographs, used for various diagnostic purposes, were obtained from the archive of the Faculty of Dentistry of Eskisehir Osmangazi University. The data set included 1125 anonymized bite-wing radiographs from adults obtained from January 2019 to January 2020. Only high-quality bite-wing radiographs were included in the study. Radiographs with metal superimposition, cone-cut, position and motion artefacts, crowns, bridges or implants were excluded. The research protocol was approved by the Non-interventional Clinical Research Ethics Committee of Eskisehir Osmangazi University (decision date and number: 27 February 2020/25) and followed the principles of the Declaration of Helsinki.

Radiographic data set

Bite-wing radiographs were obtained using the Kodak CS 2200 (Carestream Dental, Atlanta, GA) 220–240-V periapical dental imaging unit with the following parameters: 60 kVp, 7 mA and 0.1 s.

Image evaluation

A radiology expert (A.P.) with 9 years of experience provided ground truth annotations for the images using Colabeler software (MacGenius, Blaze Software, Sacramento, CA). Annotations were collected as follows: the expert was asked to draw bounding boxes around all teeth and, at the same time, to provide a class label for each box with the tooth number according to the World Dental Federation (Fédération Dentaire Internationale, FDI) system (13-23-33-43 (canines), 14-15-24-25-34-35-44-45 (premolars) and 16-17-18-26-27-28-36-37-38-46-47-48 (molars)).
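The 24 class labels listed above follow from the FDI two-digit scheme: a quadrant digit (1 to 4) followed by a tooth position (3 to 8 for the teeth annotated here). The following is a minimal sketch that enumerates them; the helper names `fdi_labels` and `tooth_group` are hypothetical and are not part of the annotation software or of the study's code.

```python
# Illustrative sketch only: enumerate the 24 FDI labels used as classes.
# fdi_labels and tooth_group are hypothetical helper names.

def tooth_group(label: str) -> str:
    """Map an FDI label such as '36' to its tooth group."""
    position = int(label[1])  # second digit = position within the quadrant
    if position == 3:
        return "canine"
    if position in (4, 5):
        return "premolar"
    if position in (6, 7, 8):
        return "molar"
    raise ValueError(f"position {position} is not among the annotated classes")


def fdi_labels() -> list:
    """Return all quadrant/position combinations annotated in the study."""
    return [f"{quadrant}{position}"
            for quadrant in range(1, 5)
            for position in range(3, 9)]


labels = fdi_labels()
groups = {}
for label in labels:
    groups.setdefault(tooth_group(label), []).append(label)

print(len(labels))        # 24
print(groups["canine"])   # ['13', '23', '33', '43']
```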

Deep CNN architecture

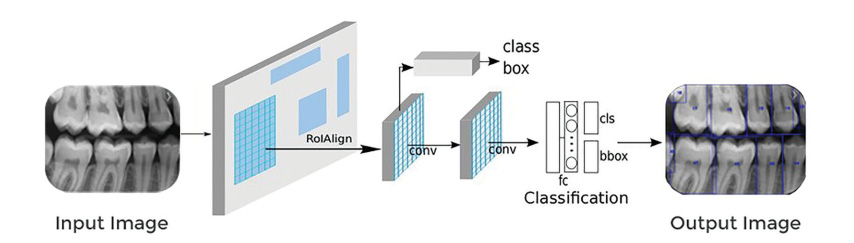

A pre-trained GoogLeNet Inception v2 Faster R-CNN network was applied to the training data set by transfer learning. TensorFlow was used for model development (Figure 1). The Inception v2 architecture was first evaluated in 2015 and featured batch normalization at the time of its introduction. It can be represented as an acyclic graph from the input layer to the classifier or regressor, and it is designed to avoid the representational bottlenecks of the previous structure (Inception v1). This graph defines a clear direction for the flow of information. As the data travel from input to output, the tensor size decreases gradually. It is also important that the width and depth are balanced within the network. For optimal performance, the width of the modules that provide depth must be adjusted correctly.

Figure 1. System architecture and pipeline of tooth detection and numbering (adapted from Gaus et al. [41]).

Increasing the width and depth increases the quality of the network structure, but the parallel processing load also increases rapidly [25]. The Faster R-CNN model, which was developed from the Fast R-CNN architecture and is based on the R-CNN method, was used for tooth detection. This model extracts feature maps from the input image using a ConvNet and passes those maps through a region proposal network (RPN), which returns object proposals. Next, the proposals are classified, and the bounding boxes are predicted.

Model pipeline and training phase

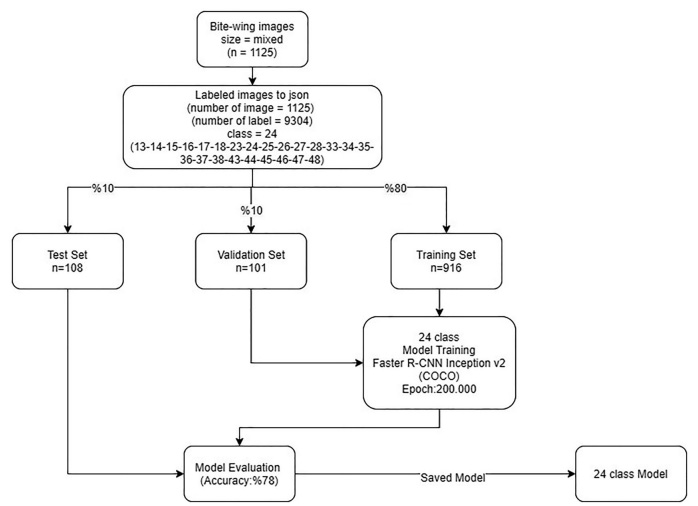

The proposed artificial intelligence (AI) model (CranioCatch, Eskisehir, Turkey) for tooth detection and numbering is based on a deep CNN trained with Faster R-CNN Inception v2 (COCO) for 200,000 epochs with a learning rate of 0.0002. The type of tooth must be specified using a separate deep CNN. The training was performed using 7000 steps on a PC with 16 GB RAM and an NVIDIA GeForce GTX 1660 Ti graphics card. The training and validation data sets were used to predict and generate optimal CNN algorithm weight factors. Bite-wing images were randomly distributed into the following groups:

- Training group: 916 images;

- Testing group: 108 images;

- Validation group: 101 images.

The data from the testing group were not reused.
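The random distribution into the three groups can be sketched as follows. This is an illustration under stated assumptions, not the authors' procedure: the function name `split_dataset`, the integer image identifiers and the fixed seed are hypothetical, and only the group sizes (916, 108 and 101) come from the text.

```python
import random


def split_dataset(image_ids, n_train=916, n_test=108, n_val=101, seed=0):
    """Shuffle image identifiers and cut them into three disjoint groups.

    The group sizes are those reported in the text; the seed is an
    assumption added only to make the sketch reproducible.
    """
    ids = list(image_ids)
    if len(ids) != n_train + n_test + n_val:
        raise ValueError("group sizes must add up to the number of images")
    rng = random.Random(seed)
    rng.shuffle(ids)
    train = ids[:n_train]
    test = ids[n_train:n_train + n_test]
    val = ids[n_train + n_test:]
    return train, test, val


# Hypothetical identifiers 0..1124 stand in for the 1125 radiographs.
train, test, val = split_dataset(range(1125))
print(len(train), len(test), len(val))  # 916 108 101
```

Because the three slices are taken from one shuffled list, no image can appear in more than one group, which is consistent with the statement that the testing data were not reused.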

After the first training, the model was used to identify the presence of teeth in the following manner.

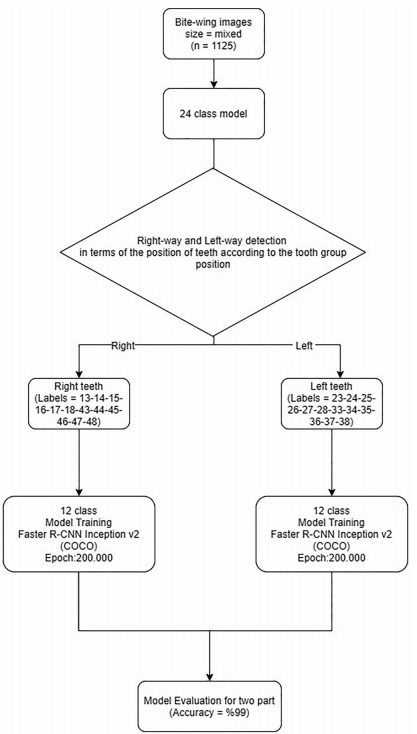

- Initially, single-model training with 24 classes was performed using 916 images. A maximum of 24 classes can be detected in each radiograph (classes 13-23-33-43 (canines), 14-15-24-25-34-35-44-45 (premolars) and 16-17-18-26-27-28-36-37-38-46-47-48 (molars)). To improve performance, training was then performed using two models: one each for the right and left sides. The method below was used to determine whether an image was taken from the right or left.

- Images were classified as right (numbered 2_, 3_) or left (numbered 1_, 4_) according to the position of the tooth groups (canines, numbered _3; premolars, numbered _4 and _5; molars, numbered _6, _7 and _8) on the bitewing film. If the canines and premolars were on the right of the radiograph, the radiograph was taken from the right side (2_, 3_) (right-way detection). If the canines and premolars were on the left of the radiograph, the radiograph was taken from the left side (1_, 4_) (left-way detection).

- Two further models were trained for the right and left teeth in 12 classes. By determining which group the image belongs to using the previous model, trained models were tested (Figures 2 and 3).

Figure 2. Diagram of the developing stages of the artificial intelligence (AI) model (CranioCatch, Eskisehir, Turkey).
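The right/left rule described in the steps above can be sketched as follows. This is one possible reading, not the authors' implementation: it assumes that each detection carries a tooth group and a horizontal box centre, that the x coordinate increases towards the right edge of the image, and that comparing mean x positions is an adequate way to decide whether the canines and premolars lie to the right of the molars. The names `image_side` and `side_classes` are hypothetical.

```python
from statistics import mean

# Quadrants per side as stated in the text: right = 2_ and 3_, left = 1_ and 4_.
SIDE_QUADRANTS = {"right": (2, 3), "left": (1, 4)}


def image_side(detections):
    """Decide the side from (tooth_group, x_center) pairs.

    Assumption: the comparison of mean x positions is an illustrative
    stand-in for the rule in the text, not the published procedure.
    """
    anterior = [x for group, x in detections if group in ("canine", "premolar")]
    molars = [x for group, x in detections if group == "molar"]
    if not anterior or not molars:
        raise ValueError("need at least one canine/premolar and one molar")
    return "right" if mean(anterior) > mean(molars) else "left"


def side_classes(side):
    """Return the 12 FDI labels handled by the model for the given side."""
    return [f"{quadrant}{position}"
            for quadrant in SIDE_QUADRANTS[side]
            for position in range(3, 9)]


# Hypothetical detections: molars on the left of the image, premolars on the right.
example = [("molar", 120.0), ("molar", 260.0),
           ("premolar", 400.0), ("premolar", 520.0)]
side = image_side(example)
classes = side_classes(side)
print(side, len(classes))  # right 12
```

Restricting each side-specific model to 12 labels halves the number of classes it must separate, which is the motivation the text gives for training two models.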

Statistical analysis

The confusion matrix was used as a metric to evaluate the success of the model. This matrix is a meaningful table that summarizes the predicted and actual situations. The performance of systems is frequently assessed using the data in the matrix [26].
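As a minimal illustration of how such a table summarizes predicted and actual situations, the sketch below counts (actual, predicted) label pairs. The labels are toy values invented for the example and are not data from the study; `confusion_counts` is a hypothetical helper name.

```python
from collections import Counter


def confusion_counts(actual, predicted):
    """Count how often each (actual, predicted) label pair occurs."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    return Counter(zip(actual, predicted))


# Toy example (not study data): one premolar is numbered 15 instead of 14.
actual = ["16", "15", "14", "46", "45"]
predicted = ["16", "15", "15", "46", "45"]

matrix = confusion_counts(actual, predicted)
correct = sum(n for (a, p), n in matrix.items() if a == p)

print(matrix[("14", "15")])  # 1 tooth with actual label 14 predicted as 15
print(correct)               # 4 teeth on the diagonal (correctly numbered)
```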

Metrics calculation procedure

The metrics used to evaluate the success of the model were as follows:

True positive (TP): teeth correctly detected and numbered;

False positive (FP): teeth correctly detected but incorrectly numbered;

False negative (FN): teeth incorrectly detected and numbered.

After calculating TP, FP and FN, the following metrics were calculated:

Sensitivity (recall): TP/(TP + FN);

Precision: TP/(TP + FP);

F1 score: 2TP/(2TP + FP + FN).
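As a worked check of these formulas, the sketch below applies them to the TP, FP and FN counts given in Table 1. The values for the second model reproduce those reported in Table 2; the values for the single 24-class model are derived here from the Table 1 counts and are not reported in the paper. The function names are illustrative.

```python
def sensitivity(tp, fn):
    """Sensitivity (recall): TP / (TP + FN)."""
    return tp / (tp + fn)


def precision(tp, fp):
    """Precision: TP / (TP + FP)."""
    return tp / (tp + fp)


def f1_score(tp, fp, fn):
    """F1 score: 2TP / (2TP + FP + FN)."""
    return 2 * tp / (2 * tp + fp + fn)


# Counts taken from Table 1.
counts = {
    "single model (24 classes)": {"tp": 591, "fp": 89, "fn": 99},
    "second model (12 classes)": {"tp": 697, "fp": 53, "fn": 18},
}

results = {
    name: {
        "sensitivity": round(sensitivity(c["tp"], c["fn"]), 4),
        "precision": round(precision(c["tp"], c["fp"]), 4),
        "f1": round(f1_score(c["tp"], c["fp"], c["fn"]), 4),
    }
    for name, c in counts.items()
}

for name, values in results.items():
    print(name, values)
# second model: sensitivity 0.9748, precision 0.9293, f1 0.9515
# single model: sensitivity 0.8565, precision 0.8691, f1 0.8628
```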

Results

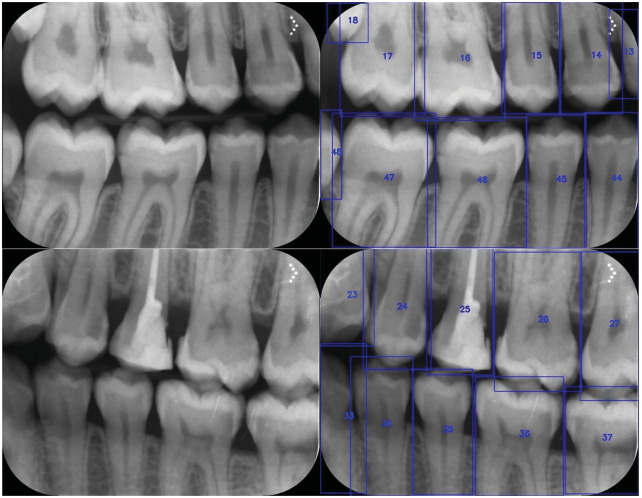

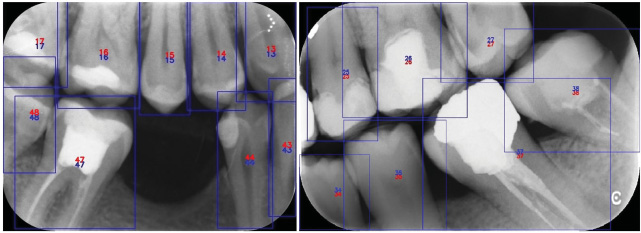

The deep CNN system showed promise for numbering teeth in bite-wing radiographs (Figures 4 and 5). The sensitivity and precision were high for numbering and detecting teeth. The sensitivity, precision and F-measure values were 0.9748, 0.9293 and 0.9515, respectively (Tables 1 and 2). Of the 108 radiographs that constituted the test group, 96 (88.9%) did not have a tooth deficiency, and 12 (11.1%) had a tooth deficiency. The number of missing teeth in these radiographs was 15.

Discussion

Bitewing radiographs are commonly used for detecting dental disease and, especially, for diagnosing approximal caries between adjacent teeth. Upper and lower teeth from the crown to the approximate level of the alveolar bone in a region of interest can be visualized in a single bite-wing radiograph. A number of studies in the literature have used bite-wing radiographs [6,27–30]. Because these radiographs show only the crowns of posterior teeth, bitewing films are more appropriate for detecting and numbering teeth than other dental radiographs [9].

Figure 3. Diagram of the developing stages of the artificial intelligence (AI) model (CranioCatch, Eskisehir, Turkey).

Several studies focussed on image-processing algorithms to achieve high-accuracy classification and segmentation in dental radiographs using mathematical morphology, active contour models, level-set methods, Fourier descriptors, textures, Bayesian techniques, linear models or binary support vector machines [6,9,27–30]. However, image components are usually obtained manually using these image-enhancement algorithms. The deep learning method yields fair outcomes by automatically obtaining image features. The objects detected in an image are classified by a pre-trained network, following processes such as filtering and subdivision, without preliminary diagnostics. With its direct problem-solving ability, deep learning is used extensively in the medical field, and various deep learning architectures have been developed, such as CNNs, which have high performance in image recognition tasks [31]. Deep learning methods using CNNs are a cornerstone of medical image analysis [8,32]. Such methods have also been preferred in AI studies in medical and dental radiology [5,7] and will facilitate tooth detection and numbering in dental radiographs. The aim of radiological AI studies is to automate routine, simple evaluations so that radiologists can read images faster, saving time for more complex cases. The numbering of teeth in dental radiology is a routine evaluation that takes up time. Mahoor et al. [6] introduced a method for robust classification of premolar and molar teeth in bitewing images using Bayesian classification. They used two types of Fourier descriptors, complex coordinate signatures and centroid distances of the contours, for feature description and classification. The results showed a considerable increase in performance for maxillary premolars (from 72.0% to 94.2%). They concluded that the classification of premolars is more accurate than that of molars. Lin et al. [28] used image-enhancement and tooth-segmentation techniques to classify and number teeth on bite-wing radiographs. Their system showed accuracy rates of 95.1% and 98.0% for classification and numbering, respectively [28]. Ronneberger et al. [33] designed a U-Net architecture for segmentation of tooth structures (enamel, dentine and pulp) in bite-wing images. The similarity value was over 70% [33,34].
Eun and Kim [35] used a CNN model to classify each tooth proposal as a tooth or a non-tooth in periapical radiographs. In their method, the tooth was marked using oriented bounding boxes. The oriented tooth proposals method showed much higher performance than did the sliding window technique [35]. Zhang et al. [36] applied a CNN for tooth detection and classification in dental periapical radiographs. Their method achieved very high precision and recall rates for 32 teeth positions [36]. Tuzoff et al. [24] used the VGG-16 Net, a base CNN method using a regional proposal network for object detection, to detect and number teeth on panoramic radiographs for automatic dental charting; the accuracy was >99% [24]. Oktay [37] used a CNN with a modified version of the AlexNet architecture to perform multi-class classification; the accuracy was >90% [37]. Miki et al. [4] evaluated a method to classify tooth type on cone-beam CT images using a deep CNN. The accuracy was up to 91.0% [4]. Silva et al. [38] presented a preliminary application of Mask R-CNN to demonstrate the utility of a deep learning method in panoramic image segmentation. The accuracy, specificity, precision, recall and F-score values were 92%, 96%, 84%, 76% and 79%, respectively. Therefore, Mask R-CNN yielded few false positives and false negatives using a small number of training images. Wirtz et al. [39] assessed automatic tooth segmentation in panoramic images using a coupled shape model with a neural network. This model ensures the segmentation of the tooth region with respect to position and scale; the average precision and recall values were found to be 0.790 and 0.827, respectively [40]. Lee et al. [40] proposed a more refined, fully deep learning method using a fine-tuned Mask R-CNN for automated tooth segmentation of dental panoramic images.
The precision, recall, F1 score and mean IoU value were 0.858, 0.893, 0.875 and 0.879, respectively. That method showed better performance than those of Silva et al. [38] and Wirtz et al. [39]. The performance for incisors and canine teeth was better than for other tooth types. Previous studies using deep learning methods were based on panoramic and periapical radiographs. To our knowledge, no study has evaluated tooth detection or numbering in bite-wing radiographs using a deep learning method based on R-CNN. Our samples included 1125 bite-wing radiographs. The proposed model had high sensitivity and precision rates. The sensitivity, precision and F-measure values were 0.9748, 0.9293 and 0.9515, respectively. The samples lacked crowns, bridges, implants and implant-supported restorations; also, 12 (11.1%) of the test radiographs had missing teeth. Radiographs with primary teeth were excluded, and only radiographs obtained from adults were used. These limitations should be the focus of further studies.

Figure 4. The numbering of teeth in bite-wing radiographs using a deep convolutional neural network system.

Figure 5. The numbering of missing and restored teeth in bite-wing radiographs using a deep convolutional neural network system.

Table 1. The number of teeth detected and numbered correctly and incorrectly by the artificial intelligence (AI) models.

| | Single model (24 classes) | Second model (12 classes) |
| --- | --- | --- |
| True positives (TP) | 591 | 697 |
| False positives (FP) | 89 | 53 |
| False negatives (FN) | 99 | 18 |

Table 2. AI model estimation performance measures using a confusion matrix.

| Measure | Value | Derivation |
| --- | --- | --- |
| Sensitivity (recall) | 0.9748 | TP/(TP + FN) |
| Precision | 0.9293 | TP/(TP + FP) |
| F1 score | 0.9515 | 2TP/(2TP + FP + FN) |

Conclusions

A CNN approach for the analysis of bite-wing images holds promise for detecting and numbering teeth. This method can save time by enabling automatic preparation of dental charts and electronic dental records.

Ethical approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki declaration and its later amendments or comparable ethical standards.

Informed consent

Additional informed consent was obtained from all individual participants included in the study.

Disclosure statement

The authors declare that they have no conflict of interest.

ORCID

Kaan Orhan http://orcid.org/0000-0001-6768-0176

References

[1] Chan M, Dadul T, Langlais R, et al. Accuracy of extraoral bitewing radiography in detecting proximal caries and crestal bone loss. J Am Dent Assoc. 2018;149(1):51–58.

[2] Vandenberghe B, Jacobs R, Bosmans H. Modern dental imaging: a review of the current technology and clinical applications in dental practice. Eur Radiol. 2010;20(11):2637–2655.

[3] Suzuki K. Overview of deep learning in medical imaging. Radiol Phys Technol. 2017;10(3):257–273.

[4] Miki Y, Muramatsu C, Hayashi T, et al. Classification of teeth in cone-beam CT using deep convolutional neural network. Comput Biol Med. 2017;80:24–29.

[5] Hung K, Montalvao C, Tanaka R, et al. The use and performance of artificial intelligence applications in dental and maxillofacial radiology: a systematic review. Dentomaxillofac Radiol. 2020; 49(1):20190107.

[6] Mahoor MH, Abdel-Mottaleb M. Classification and numbering of teeth in dental bitewing images. Pattern Recognit. 2005;38(4):577–586. doi:10.1016/j.patcog.2004.08.012.

[7] Hosny A, Parmar C, Quackenbush J, et al. Artificial intelligence in radiology. Nat Rev Cancer. 2018;18(8):500–510.

[8] Litjens G, Kooi T, Bejnordi BE, et al. A survey on deep learning in medical image analysis. arXiv preprint arXiv:1702.05747; 2017.

[9] Chen H, Zhang K, Lyu P, et al. A deep learning approach to automatic teeth detection and numbering based on object detection in dental periapical films. Sci Rep. 2019;9(1):3840.

[10] Devito KL, de Souza Barbosa F, Felippe Filho WN. An artificial multilayer perceptron neural network for diagnosis of proximal dental caries. Oral Surg Oral Med Oral Pathol Oral Radiol Endod. 2008;106(6):879–884.

[11] Valizadeh S, Goodini M, Ehsani S, et al. Designing of a computer software for detection of approximal caries in posterior teeth. Iran J Radiol. 2015;12(4):e16242.

[12] Ekert T, Krois J, Meinhold L, et al. Deep learning for the radiographic detection of apical lesions. J Endod. 2019;45(7): 917–922.e5.

[13] Orhan K, Bayrakdar IS, Ezhov M, et al. Evaluation of artificial intelligence for detecting periapical pathosis on cone-beam computed tomography scans. Int Endod J. 2020;53(5):680–689.

[14] Ariji Y, Yanashita Y, Kutsuna S, et al. Automatic detection and classification of radiolucent lesions in the mandible on panoramic radiographs using a deep learning object detection technique. Oral Surg Oral Med Oral Pathol Oral Radiol. 2019;128(4): 424–430.

[15] Lee JH, Kim DH, Jeong SN. Diagnosis of cystic lesions using panoramic and cone beam computed tomographic images based on deep learning neural network. Oral Dis. 2020;26(1):152–158.

[16] Lee JH, Kim DH, Jeong SN, et al. Diagnosis and prediction of periodontally compromised teeth using a deep learning-based convolutional neural network algorithm. J Periodontal Implant Sci. 2018;48(2):114–123.

[17] Krois J, Ekert T, Meinhold L, et al. Deep learning for the radiographic detection of periodontal bone loss. Sci Rep. 2019;9(1): 8495.

[18] Murata M, Ariji Y, Ohashi Y, et al. Deep-learning classification using convolutional neural network for evaluation of maxillary sinusitis on panoramic radiography. Oral Radiol. 2019;35(3):301–307.

[19] De Tobel J, Radesh P, Vandermeulen D, et al. An automated technique to stage lower third molar development on panoramic radiographs for age estimation: a pilot study. J Forensic Odontostomatol. 2017;35(2):42–54.

[20] Fukuda M, Inamoto K, Shibata N, et al. Evaluation of an artificial intelligence system for detecting vertical root fracture on panoramic radiography. Oral Radiol. 2020;36(4):337–343.

[21] Hiraiwa T, Ariji Y, Fukuda M, et al. A deep-learning artificial intelligence system for assessment of root morphology of the mandibular first molar on panoramic radiography. Dentomaxillofac Radiol. 2019;48(3):20180218.

[22] Kunz F, Stellzig-Eisenhauer A, Zeman F, et al. Artificial intelligence in orthodontics: evaluation of a fully automated cephalometric analysis using a customized convolutional neural network. J Orofac Orthop. 2020;81(1):52–68.

[23] Kats L, Vered M, Zlotogorski-Hurvitz A, et al. Atherosclerotic carotid plaque on panoramic radiographs: neural network detection. Int J Comput Dent. 2019;22(2):163–169.

[24] Tuzoff DV, Tuzova LN, Bornstein MM, et al. Tooth detection and numbering in panoramic radiographs using convolutional neural networks. Dentomaxillofac Radiol. 2019; 48(4):20180051.

[25] Szegedy C, Vanhoucke V, Ioffe S, et al. Rethinking the inception architecture for computer vision. Paper presented at Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2016. p. 2818–2826.

[26] Provost F, Kohavi R. Glossary of terms. Mach Learn. 1998;30: 271–274.

[27] Said EH, Nassar DEM, Fahmy G, et al. Teeth segmentation in digitized dental X-ray films using mathematical morphology. IEEE Trans Inform Forensic Secur. 2006;1(2):178–189.

[28] Lin P, Lai Y, Huang P. An effective classification and numbering system for dental bitewing radiographs using teeth region and contour information. Pattern Recognit. 2010;43(4):1380–1392.

[29] Yuniarti A, Nugroho AS, Amaliah B, et al. Classification, and numbering of dental radiographs for an automated human identification system. TELKOMNIKA. 2012;10(1):137.

[30] Rad AE, Rahim MSM, Norouzi A. Digital dental X-ray image segmentation and feature extraction. TELKOMNIKA. 2013;11(6): 3109–3114.

[31] Yasaka K, Akai H, Kunimatsu A, et al. Deep learning with convolutional neural network in radiology. Jpn J Radiol. 2018;36(4): 257–272.

[32] Li Z, Zhang X, Müller H, et al. Large-scale retrieval for medical image analytics: a comprehensive review. Med Image Anal. 2018;43:66–84.

[33] Ronneberger O, Fischer P, Brox T. U-net: convolutional networks for biomedical image segmentation. International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer; 2015. p. 234–241.

[34] Schwendicke F, Golla T, Dreher M, et al. Convolutional neural networks for dental image diagnostics: a scoping review. J Dent. 2019;91:103226.

[35] Eun H, Kim C. Oriented tooth localization for periapical dental X-ray images via convolutional neural network. Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA), IEEE; 2016. p. 1–7.

[36] Zhang K, Wu J, Chen H, et al. An effective teeth recognition method using label tree with cascade network structure. Comput Med Imaging Graph. 2018;68:61–70.

[37] Oktay AB. Tooth Detection with Convolutional Neural Networks, Medical Technologies National Congress (TIPTEKNO); IEEE; 2017. p. 1–4.

[38] Silva G, Oliveira L, Pithon M. Automatic segmenting teeth in X-ray images: trends, a novel data set, benchmarking and future perspectives. Expert Syst Appl. 2018;107:15–31.

[39] Wirtz A, Mirashi SG, Wesarg S. Automatic teeth segmentation in panoramic X-ray images using a coupled shape model in combination with a neural network. International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer; 2018. p. 712–719.

[40] Lee JH, Han SS, Kim YH, et al. Application of a fully deep convolutional neural network to the automation of tooth segmentation on panoramic radiographs. Oral Surg Oral Med Oral Pathol Oral Radiol. 2020;129(6):635–642.

[41] Gaus YFA, N. Bhowmik S, Akçay PM, et al. Evaluation of a dual convolutional neural network architecture for object-wise anomaly detection in cluttered X-ray security imagery. 2019 Internationa
